Data Crunch

500 hours of movies. 50,000 trees made into paper and printed. 200,000 five-minute songs. These are just a few examples of the amount of digital data equivalent to one terabyte. Pretty impressive, right?

Now consider that in additive manufacturing (AM), the process-monitored build of one modestly sized part can also generate as much as a terabyte of data. That’s just a single part in a potential production run of hundreds or thousands of parts. Do the math, and you can see that we’re talking about massive amounts of information.

As AM continues to evolve and expand in its applications, this flood of ones and zeroes will only continue to increase. Two key data-related questions are currently on the minds of many people in the AM community. How do the companies implementing this uniquely flexible technology actually use all the data they’re accumulating? And perhaps more importantly: How do they keep it secure?

As it turns out, members of ASTM International’s committee on additive manufacturing (F42) are hard at work on standards that will help the industry address these issues.

Big Data

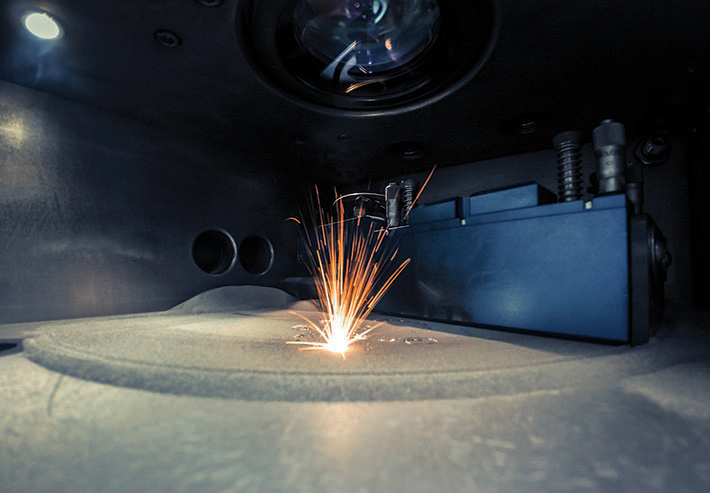

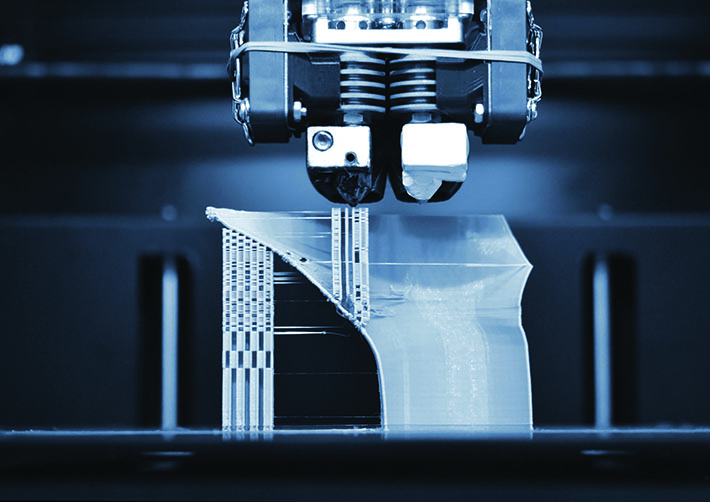

Additive manufacturing (AM) is a relatively new technology that builds three-dimensional objects by depositing successive layers of material atop one another, based on the desired geometry defined by a computer file. (It’s the opposite of more traditional, subtractive manufacturing, which starts with a solid piece of material that is shaped into its final form by cutting, grinding, or other mechanical forces.)

One of the defining characteristics of AM is the lightning-fast interaction between the digital (computer files) and physical (actuators such as motors, lasers, etc.) aspects of its operation. In fact, the seamless electronic connectivity that enables process monitoring of AM operations is the primary driver of the data-management challenge.

“The massive amounts of data generated are essentially due to the multi-scale nature of AM, and the need to resolve very small and fast physics over large build volumes and long build durations,” says Brandon Lane, Ph.D., a member of the subcommittee on data (F42.08) who is also leading the “Metrology for Real Time Monitoring of Additive Manufacturing” project at the U.S. National Institute of Standards and Technology (NIST). He offers an example to illustrate how the numbers can add up so quickly.

“Stringent requirements on dimensional tolerance and surface finish of AM parts have pushed AM machines to utilize heat sources like focused laser and electron beam at the scale of tens of micrometers, the same scale where much of the core fabrication process physics occur,” he explains. “However, AM part designs are often on the hundreds of millimeters or more. Conceptually, if you divide a 100-mm cube volume into 10-micrometer ‘pieces,’ you end up with one trillion pieces. If each piece has one byte of data associated with it, you have one terabyte of data.”
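Lane's arithmetic is easy to check. The sketch below is purely illustrative: the function name is hypothetical, and the one-byte-per-piece figure is the assumption from his example, not a property of any particular AM system.

```python
def voxel_data_bytes(cube_edge_mm: float, voxel_edge_um: float,
                     bytes_per_voxel: int = 1) -> int:
    """Bytes needed to store one value per small 'piece' (voxel) of a cube."""
    # Convert the cube edge from millimeters to micrometers, then count
    # how many voxel edges fit along one cube edge.
    voxels_per_edge = round(cube_edge_mm * 1000 / voxel_edge_um)
    return voxels_per_edge ** 3 * bytes_per_voxel


total = voxel_data_bytes(100, 10)  # 100-mm cube, 10-micrometer pieces
print(f"{total:,} bytes = {total / 1e12:g} TB")
```

Ten thousand pieces along each edge, cubed, gives the one trillion pieces Lane describes.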

Lane notes that similar hypothetical calculations can be done in the time domain. Process physics might require a sensor to acquire data at a sampling rate upwards of 100,000 samples per second — and many AM builds take more than 24 hours to complete. “Compound this with the fact that more than one process-monitoring technology is often used, and the volume of collected data grows immensely,” he says.
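The time-domain version of the calculation can be sketched the same way. The function and parameter names here are hypothetical, and the figures are simply the ones Lane cites (100,000 samples per second, a 24-hour build).

```python
def samples_per_build(sample_rate_hz: int, build_hours: float,
                      n_sensors: int = 1) -> int:
    """Total samples recorded over one build by n_sensors identical sensors."""
    seconds = build_hours * 3600
    return int(sample_rate_hz * seconds) * n_sensors


one_sensor = samples_per_build(100_000, 24)
three_sensors = samples_per_build(100_000, 24, n_sensors=3)
print(f"{one_sensor:,} samples from one sensor")
print(f"{three_sensors:,} samples from three sensors")
```

How many bytes each sample occupies depends on the sensor, so the sketch stops at the sample count rather than guessing a storage total.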

The Five Vs

Sizeable as it is, AM process data represents only a drop in the bucket of what is often referred to as “big data”: the truly staggering amount of information generated globally across all segments (consumer, scientific, commercial/industrial, etc.) by online social media services, the “Internet of Things” (connected physical “smart” devices such as home appliances and vehicles), and more widespread implementation of machine learning systems.

The quest to maximize the usefulness of big data is reflected in the so-called “Five Vs”: volume, velocity, variety, veracity, and value. It’s this last one that AM practitioners are particularly focused on as they look for ways to exploit the value of their specific datasets by developing standardized file formats, data structures, metadata definitions, and database schema.

“Common practice is to collect and store all the raw sensor data with the goal or hope that a good metric or data feature can eventually be identified,” Lane says. “Here, ‘good’ means that metric is highly correlated to the fabrication quality or some as-built part quality of interest in the final product.” He adds that most current AM process-monitoring protocols, rather than measuring an absolute metric such as temperature or pore density, establish a metric that is related to some final part quality or the machine fabrication performance.

“For example, a melt-pool monitoring instrument might process the raw sensor data into some metric related to melt-pool ‘intensity’ that is fundamentally related to real physical metrics such as melt-pool size and temperature,” Lane states. “But if this ‘intensity’ metric can be correlated to fabrication qualities such as pore formation, overheating, or thermal heterogeneity, it may not need to be directly related to melt-pool size or temperature. ‘Intensity’ in this case is not an absolute metric and doesn’t have real-world units.”
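One simple way to test whether a unitless metric is “good” in Lane's sense is to compute its correlation with a measured part quality. The sketch below uses the Pearson correlation coefficient as one possible choice; the article does not name a specific statistic, and the numbers are made up for illustration.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


# Hypothetical data: one unitless "intensity" value and one measured
# porosity percentage per test build. These values are invented.
intensity = [0.8, 1.0, 1.2, 1.5, 1.9]
porosity_pct = [0.1, 0.2, 0.2, 0.4, 0.6]

r = pearson_r(intensity, porosity_pct)
print(f"correlation between intensity and porosity: r = {r:.2f}")
```

A value of r near 1 or -1 would suggest the metric tracks the quality of interest even though it has no physical units.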

An Important Step Forward

The standard guide for in-process monitoring using optical and thermal methods for laser powder bed fusion (E3353), approved earlier this year, is an important step toward maximizing the value of AM data. Lane points out that E3353 includes explanations of different ways in-process monitoring data might be used, categorized two ways: production versus development, and inspection versus control.

“‘Production’ implies the monitoring data is used during manufacturing to predict or evaluate the quality of the fabrication process or the final part,” Lane says. “This is really the primary goal of AM process monitoring: to be able to correlate some in-process measured signature that can predict the occurrence or formation of a defect in the part.”

“Development” in this context refers to the use of monitoring data during the early phases of an AM process design. Technical requirements here would be a bit looser than production since the data isn’t necessarily being used for quality control of an actual product. “For example, a company developing machine parameters like laser power or scan speed for a new material might look at the monitoring data to identify the range where the fabrication is stable/unstable, good/bad, etc.,” Lane says.

In the realm of inspection versus control, inspection means using in-process monitoring in the same way as nondestructive testing or other part quality-assurance measurements, where the goal is to determine whether a part is acceptable based on the identification of some critical defect.

Control, on the other hand, relates to the use of monitoring data to track how well the machine itself is performing. “Production engineers might identify some in-process sensor response limits based on what was recorded during fabrication of a number of ‘good’ parts,” Lane says. That same sensor can then be used while monitoring subsequent builds to identify problems and, if necessary, scrap the build or take some other corrective action.
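The control scenario can be sketched in a few lines. This is a minimal illustration, not a method taken from E3353: it assumes limits set at the mean plus or minus three standard deviations of readings from “good” builds, a common statistical process control convention, and all names and values are hypothetical.

```python
from statistics import mean, stdev


def control_limits(good_readings, k=3.0):
    """Lower and upper limits: mean +/- k standard deviations (assumed rule)."""
    center = mean(good_readings)
    spread = stdev(good_readings)
    return center - k * spread, center + k * spread


def out_of_limits(readings, limits):
    """Indices of readings that fall outside the control limits."""
    lower, upper = limits
    return [i for i, value in enumerate(readings)
            if not lower <= value <= upper]


# Invented sensor responses recorded while building known-good parts.
good = [10.1, 9.9, 10.0, 10.2, 9.8, 10.0]
limits = control_limits(good)

# Invented readings from a later build; the third one is far off.
flagged = out_of_limits([10.0, 10.1, 12.5, 9.9], limits)
print(f"limits: {limits[0]:.2f} to {limits[1]:.2f}, flagged indices: {flagged}")
```

A flagged reading would then prompt the operator to investigate, scrap the build, or take some other corrective action.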

Each of the four scenarios outlined here can require multiple builds and tests to establish a statistical basis for comparison of process-monitoring data to control limits or formation of a critical defect. And each iteration can result in terabytes of raw data that will need to be stored, transferred, and eventually analyzed.

Data Registration

E3353 is approved and ready to be used by those seeking to better manage and utilize their AM datasets. Standards in other areas — such as acquisition and storage of AM process-monitoring data, metadata requirements, and visualization/representation — are still in progress.

“In-process data is very often represented or visualized in the same geometric form or shape as the part being fabricated,” Lane says. “Practitioners can flip, rotate, slice, or otherwise manipulate a digital representation of the AM part, sometimes referred to as a ‘digital twin,’ that is composed entirely of sensor data.”

Data registration is the process used to align the multi-modal sensor data from local coordinate systems to a global coordinate system. It is the focus of a joint effort by ASTM and the International Organization for Standardization (ISO) under the auspices of the F42 data subcommittee (F42.08). The two organizations are currently working together to develop the specification for additive manufacturing — general principles — registration of process-monitoring and quality-control data (WK73978). Shaw Feng, Ph.D., is chair of the working group.
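In its simplest form, moving data from a sensor's local coordinate system to a global one is a rotation followed by a translation. The two-dimensional sketch below shows only that generic idea; the function name, angle, and offset are hypothetical, and the draft standard (WK73978) may specify registration quite differently.

```python
import math


def register_points(points, angle_deg, offset):
    """Map (x, y) points from a sensor's local frame to the global frame
    by rotating about the origin, then translating by offset."""
    angle = math.radians(angle_deg)
    cos_a, sin_a = math.cos(angle), math.sin(angle)
    off_x, off_y = offset
    return [(cos_a * x - sin_a * y + off_x,
             sin_a * x + cos_a * y + off_y)
            for x, y in points]


# Invented example: a camera mounted rotated 90 degrees relative to the
# build plate, with its origin at (10, 5) in build-plate coordinates.
camera_points = [(1.0, 0.0), (0.0, 2.0)]
global_points = register_points(camera_points, angle_deg=90, offset=(10.0, 5.0))
for local, registered in zip(camera_points, global_points):
    print(f"{local} -> ({registered[0]:.1f}, {registered[1]:.1f})")
```

Once every sensor's data is expressed in the same global frame, readings from different instruments can be compared at the same location on the part.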

“The variety and volume of data collected by measurement devices used to monitor AM processes and inspect fabricated AM parts have increased exponentially in recent years,” notes Feng, a mechanical engineer at NIST. “Each measurement device collects a unique data type, and a common, open method to register these different AM data types is needed so that their uses can be identified for downstream applications including qualifications, certifications, and data analytics.”

A standard that provides a procedure and methods to register AM data will enable operators to access validated data, allow data alignment and fusion for process monitoring and control, and facilitate detection of defects traceable to process parameters. As of late September, the AM data registration standard was moving through F42.08, with plans for parallel voting by the full committee F42 and ISO once subcommittee approval was secured.

Data Security

It is clear that making the most of all the data generated by AM part builds can get complicated. Keeping all this digital information secure is also a multi-faceted challenge.

“Additive manufacturing is a cyber-physical system,” explains Mark Yamploskiy, Ph.D., a professor at Auburn University who also leads the ASTM working group developing the guide for AM security — general principles — guidelines for AM security (WK78322). He defines this duality by distinguishing between AM’s purpose and functionality, which is to create physical objects, and the fact that the technology is directed and controlled by cyber components.

“Then you have signals that are going to actuators like motors, lasers, compressors, etc., that are doing the actual work in the physical domain,” Yamploskiy adds. “As a result of these complex interactions, data traveling back and forth across cyber- and physical-domain boundaries, you produce a part.”

Potential vulnerabilities exist in both the cyber and physical aspects of AM. “I can hack into your computer or your 3D printer,” Yamploskiy says. “Among other things, I could inject a malicious code into the software used in your 3D printer, or modify the slicer software on the computer. In the security community, we understand these types of cyberattacks pretty well. We know how to protect against them and are getting better and better, though we are still not 100% secure even after decades of work.”

But the cyber-physical interactions that are integral to AM are much less understood from a security standpoint. For instance, Yamploskiy points out that because the electrical signals sent to actuators are physical in nature, they can be measured and used in an attack.

“Basically, I can measure the physical emanations of cyber-physical processes, record this information, and then analyze it. These emanations, which can be acoustic, electromagnetic, optical or power-related, are known as side channels,” Yamploskiy says. “Using this side-channel data, I can steal the 3D printer design.” In fact, he was part of a team that measured the power side channel during operation of a fused deposition modeling (FDM) 3D printer and, using just that data, was able to reconstruct the part to about 99% accuracy.

Theft of a part design is just one way the security of an AM system can be compromised. Rather than flat-out stealing, a nefarious entity — disgruntled employee, competitor, state actor — could sabotage the design. Or the actor could simply observe, learning trade secrets or seeing what a company does to gain a competitive advantage.

“If they are coming in low and slow, they are compromising a machine and then staying dormant and observing for a while the behavior of the systems they have compromised before making the next move,” Yamploskiy says. “They are trying to stay under the radar until they really want to conduct an attack. And at that point they have many options: attack in cyberspace, attack using the cyber-physical interactions or transitions, or attack in physical space.”

In addition to guidelines for AM security, Yamploskiy is also part of the group working on the guidelines for AM technical and intellectual property authentication and protection (WK76970). Work on an outline began in September, with team members planning to write individual sections for presentation to other ASTM committees with an interest in this topic.

The Work Continues

Several of the important ASTM initiatives surrounding AM and the massive quantities of data it generates have been examined here. But they are far from the only ones.

For example, the subcommittee on data is also working on a revision to the standard data dictionary and standards for data models and data exchange formats (F3490). “What we’re calling the Common Data Dictionary, or CDD, will include a high-level common-data model in the first revision of the standard,” notes Alex Kitt, Ph.D., F42.08 chair and director of data science at EWI. “This will go a long way to making data interoperable and reusable.”

Another step forward is the practice for additive manufacturing — data — common exchange format for particle size analysis by light scattering (WK75158), which passed balloting in late summer and was being formatted to become a full standard at the time of this writing.

Clearly, much work remains to be done. But according to Kitt, the potential value of AM data is enormous, and still largely untapped. “There is an opportunity to use data to accelerate AM development and adoption, but this requires the development of standards,” he says. “It also requires that the community address the political, economic, social, and technical barriers to adopting those standards. How do you convince a company to adopt the standards developed if they’ve already invested in their own internal standards? What’s the value they would get from adopting?”

These and many other questions related to the AM data issue will be addressed through the dedicated work of the members of F42 and other ASTM committees. ■

Jack Maxwell is a freelance writer based in Westmont, N.J.
